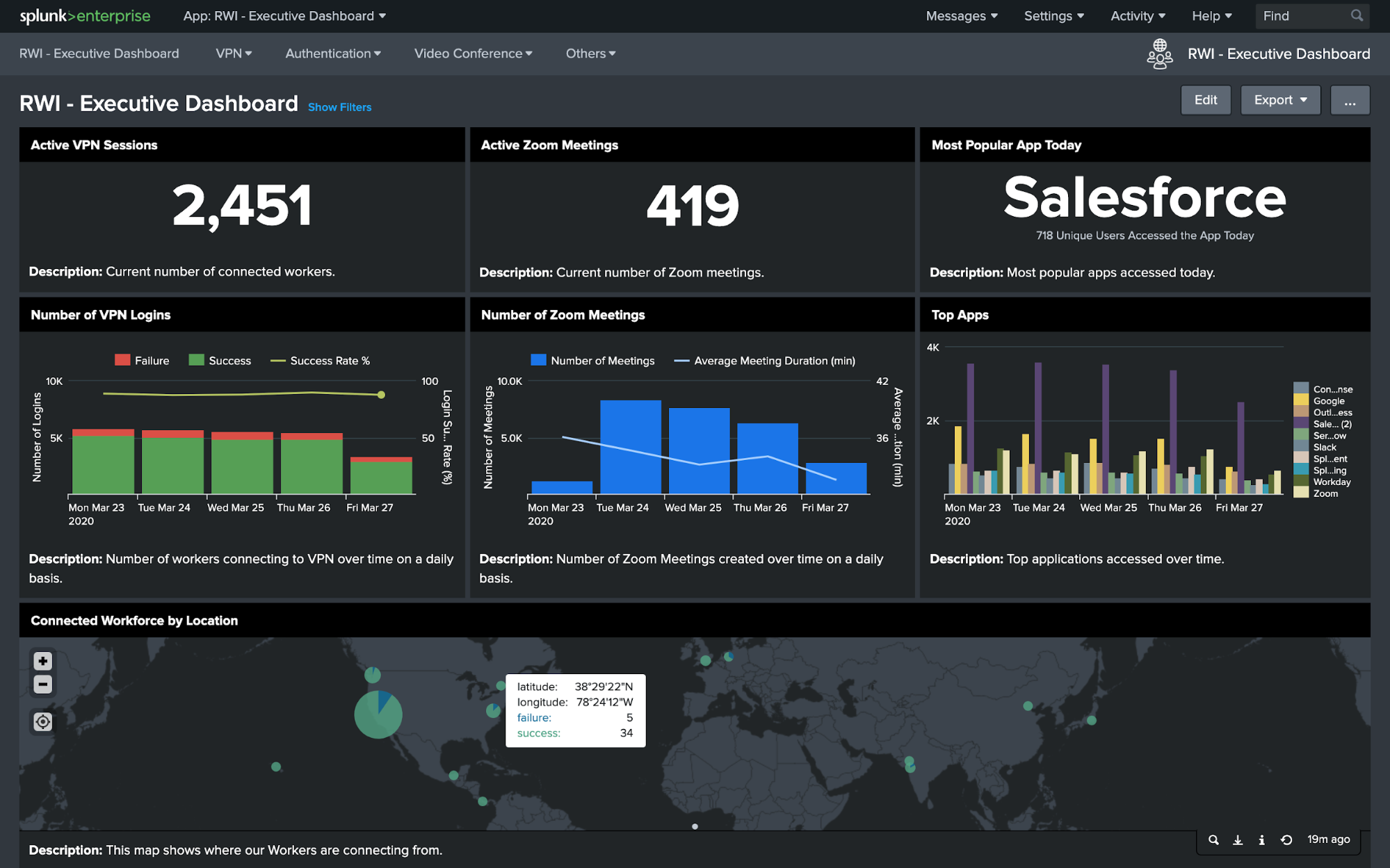

Splunk recently released Remote Work Insights - Executive Dashboards. An organization can build dashboards by collecting data from tools like Zoom, Okta, and its VPN software. These can help executives figure out how well their remote tools are working and where people are spending their time.

For example, you can create dashboards like:

- Number of Zoom meetings active in the org at a time, classified by type of meeting (scheduled meeting, personal meeting room, etc.)

- Duration of Zoom meetings and a histogram of those durations. This can show how long meetings are running and how much time an individual is spending in meetings.

- Whether meetings are running past their scheduled time. This can help executives figure out if meeting time is being used well. Zoom meeting etiquette training can be introduced to keep that in check.

- Single sign-on systems like Okta can tell which apps people are using most. This could help executives detect which SaaS tools are actually being used and which are lying idle, which in turn can help rationalise SaaS spending.

- Integrating with VPNs can also show the number of people logged in via VPN, the locations they are logging in from, etc.
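To make the meeting metrics concrete, here is a minimal sketch of how a duration histogram and an "ran past schedule" share could be computed. The `Meeting` record, its field names, and the sample values are assumptions made up for illustration; they are not the Zoom or Splunk data model.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Meeting:
    # Hypothetical record shape, not an actual Zoom or Splunk schema.
    meeting_type: str        # e.g. "scheduled" or "personal_room"
    scheduled_minutes: int
    actual_minutes: int


def duration_histogram(meetings, bucket_minutes=15):
    """Count meetings per duration bucket, keyed by the bucket's lower bound."""
    return Counter(
        (m.actual_minutes // bucket_minutes) * bucket_minutes for m in meetings
    )


def overrun_share(meetings):
    """Fraction of meetings that ran longer than scheduled."""
    if not meetings:
        return 0.0
    overran = sum(1 for m in meetings if m.actual_minutes > m.scheduled_minutes)
    return overran / len(meetings)


# Made-up sample data, only to exercise the functions.
SAMPLE = [
    Meeting("scheduled", 30, 42),
    Meeting("scheduled", 60, 55),
    Meeting("personal_room", 30, 30),
    Meeting("scheduled", 15, 20),
]

if __name__ == "__main__":
    print(dict(duration_histogram(SAMPLE)))
    print(overrun_share(SAMPLE))
```

Grouping the same records by `meeting_type` would give the per-type breakdown from the first bullet.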

Splunk Remote Insights - Dashboard

Now, Splunk faced a lot of flak for this. People were enraged that this looked like corporations trying to extract more work from their employees at a time when everybody is struggling on their own. But if you look carefully, I am not sure that is the objective. They are not trying to monitor whether people are doing their jobs. They are just trying to figure out whether people have enough access to do their work, whether they are facing any difficulties, etc.

It is very similar to how you monitor an app or service. Remote working tools like Zoom, VPNs, etc. are providing a service. How do we figure out if they are working well for the employees? If a certain location shows a high number of VPN login failures, there might be an issue with the VPN provider or the network in that area. If people are spending too much time in meetings, then some sort of training may be needed to make them more productive over Zoom.
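The VPN example is essentially an error-rate check grouped by location, much like an error-rate alert on a service. A minimal sketch, assuming login events arrive as `(location, succeeded)` pairs; the event shape, the location names, and the thresholds are all illustrative assumptions, not any VPN vendor's log format:

```python
from collections import defaultdict


def failure_rate_by_location(events):
    """Return {location: failed_logins / total_logins} for (location, succeeded) pairs."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for location, succeeded in events:
        totals[location] += 1
        if not succeeded:
            failures[location] += 1
    return {loc: failures[loc] / totals[loc] for loc in totals}


def flag_locations(events, threshold=0.5, min_attempts=3):
    """Locations whose failure rate is at or above threshold, ignoring tiny samples."""
    events = list(events)
    attempts = defaultdict(int)
    for location, _ in events:
        attempts[location] += 1
    rates = failure_rate_by_location(events)
    return sorted(
        loc for loc, rate in rates.items()
        if attempts[loc] >= min_attempts and rate >= threshold
    )


# Made-up sample events; "site-c" has too few attempts to be flagged.
SAMPLE_EVENTS = [
    ("site-a", True), ("site-a", True), ("site-a", False), ("site-a", True),
    ("site-b", False), ("site-b", False), ("site-b", True), ("site-b", False),
    ("site-c", False),
]

if __name__ == "__main__":
    print(failure_rate_by_location(SAMPLE_EVENTS))
    print(flag_locations(SAMPLE_EVENTS))
```

The `min_attempts` guard is a design choice to avoid flagging a location on the strength of one or two failed logins.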

Since this is a new experience for many teams, monitoring the tools more closely only helps to find issues and solve them.

This is what I call "Tools Observability", and as we get more remote-friendly, this will become more important.

Screenshots from SigNoz - Monitoring applications

While this seems like a very specific use case for monitoring software, the underlying constructs are more universal and applicable in many other domains. It's just that these techniques are being applied to software monitoring first.

Business Processes like Delivery should be monitored

Monitoring is a much broader concept than we realize today. Rather than just software stacks, we can monitor business metrics, or the utilization of resources in any factory. This is what control systems were when factories were new. Researchers would devise new and ingenious ways to monitor different machines and components in the factory. Any process within an organization can be monitored in a continuous way.
